Confirmation bias leads to the selective use of information

It is easy to obtain confirmations, or verifications, for nearly every theory – if we look for confirmations. – Karl Popper

We’ll start with the premise that it is much easier to confirm something than to refute it.

Why should this be so?

In general, acceptance of a belief is cognitively easier to maintain than skepticism about that belief, which requires deep cognitive elaboration, involving resource-intensive processing and integration of new information. For instance, it has been well documented that when our cognitive resources are depleted, we are more prone to persuasion.

Consider the POW experience described by Robert Jay Lifton:

You are annihilated, exhausted, you can’t control yourself, or remember what you said two minutes before. You feel that all is lost. From that moment the judge is the real master of you. You accept anything he says.

Under duress, we prefer to agree.

Cognitive load also affects us in less intense situations. Because we are naturally predisposed to reduce cognitive load, the mind tends to use a “positive test strategy” as its default heuristic when testing a hypothesis. That is, we tend to establish beliefs and then seek evidence that confirms those beliefs.

This effect is called “confirmation bias,” since we are biased towards a confirmation of our views.

Game: Four card task

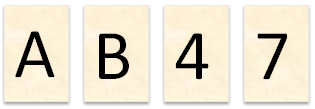

There are 4 cards on a desk and you are told that each card has a letter on one side and a number on the other. But you can only see one side. You are provided with a proposed rule: “If a card has a vowel on one side, then it has an even number on the other side.”

- Which cards would you need to turn over in order to determine whether this statement is false?

This experiment was first designed by Wason (1966, 1969), who gave the question to 128 university students. The most common answer, chosen by 46% of participants, was the cards “A” and “4.”

If you are like the 46% who got this question wrong, your reasoning probably went as follows: “I understand the rule: a vowel on one side means an even number on the other. How can I show this rule holds?”

Next, you reasoned that if A has an even number on its other side, and 4 has a vowel on its other side, then you will have shown the statement to be “true.”

This all makes sense, but note that the question asked was whether the statement is false.

The A card is easy, since it can directly falsify the statement. If you flip the A, and you get an odd number, then the statement is false.

The 4 card, however, holds no information relevant to the question, since it cannot falsify the statement.

For example, if the 4 card does not have a vowel on the other side, we cannot say the statement is false: if the 4 card had, say, a D on the other side, that D/4 combination is still consistent with the rule, since D is not a vowel. The rule has not been violated.

Likewise, if the 4 card does have a vowel on the other side, we still cannot say the statement is false, since a vowel paired with an even number is exactly what the rule predicts. Again, the rule has not been violated.

As Wason writes:

[This] implies that the need to establish the “truth” predominates over the instruction. An even number with a vowel makes the statement “true,” and hence the subject forgets presumably that an even number with a consonant couldn’t make it false.

Such bias, according to Nickerson (1998), “can be attributed to a logically based desire to seek only confirming evidence and to avoid potentially dis-confirming evidence.”

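The correct answer is the A and 7 cards. Like the A, the 7 can falsify the statement: if it has a vowel on the other side, the rule is violated. Yet in Wason’s study, fewer than 10% of participants identified this solution.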
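The logic of the task can be checked mechanically. The sketch below is only an illustration, not part of Wason’s study: it assumes the four visible faces are A, D, 4 and 7 (the text names A, 4 and 7; the D card is an assumption about the image), and the function names are hypothetical. For each visible face, it asks whether any possible hidden face could break the rule.

```python
VOWELS = set("AEIOU")
LETTERS = "ABCDEFGHIJKLMNOPQRSTUVWXYZ"
NUMBERS = "0123456789"


def violates_rule(letter, number):
    """A card breaks 'vowel -> even' only if it pairs a vowel with an odd number."""
    return letter in VOWELS and int(number) % 2 == 1


def can_falsify(visible):
    """True if some hidden face would make this card violate the rule."""
    if visible.isalpha():
        # Visible side is a letter, so the hidden side is a number.
        return any(violates_rule(visible, n) for n in NUMBERS)
    # Visible side is a number, so the hidden side is a letter.
    return any(violates_rule(l, visible) for l in LETTERS)


# Assumed visible faces (the D card is an assumption, see the note above).
cards = ["A", "D", "4", "7"]
cards_to_turn = [card for card in cards if can_falsify(card)]
print(cards_to_turn)
```

Under these assumptions only the A and 7 cards are selected. The 4 card is never selected, because no letter on its hidden side can pair a vowel with an odd number.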

Knobloch-Westerwick and Meng (2009) ran another interesting experiment with 156 participants. The study shows that, on average, people spend 36% more time reading online articles that are consistent with or favor their own attitudes and beliefs. As we will see, this tendency carries over into financial markets.

Applications in Finance:

In “Confirmation Bias, Overconfidence, and Investment Performance: Evidence from Stock Message Boards,” Park, Konana, Gu, Kumar, and Raghunathan demonstrate that users of online investing message boards and virtual communities tend to seek out information that is consistent with their beliefs, thus exhibiting confirmation bias.

The researchers looked at data from an online South Korean message board. First, investors answered questions about a specific stock, including their expected returns and an opinion (e.g., sell, hold, buy). Next, they were asked to read five new messages posted on the site: two positive, two negative, and one neutral. Investors were then asked to choose the message that 1) had the most widespread support, 2) was most strongly backed by news, and 3) had the most convincing argument.

The study found that investors tended to strongly prefer the messages that were consistent with their views.

When investors had a “Strong Sell” rating, they chose confirming bearish messages 60% of the time, versus disconfirming messages only 20% of the time. Clearly the bears had a bias for the bearish posts.

Think that’s bad?

When investors had a “Strong Buy” rating, they chose confirming bullish messages 78% of the time, and a disconfirming message only 10% of the time, displaying an incredible ~8X preference for the bull case! Making matters worse, these biased investors then became even more confident in their opinions, leading to excessive trading, and worse performance.
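The two preference ratios can be worked out directly from the percentages quoted above. The snippet below is a minimal sketch using only those four figures; the function name is hypothetical.

```python
def preference_ratio(confirming_pct, disconfirming_pct):
    """How many times more often confirming messages were chosen."""
    return confirming_pct / disconfirming_pct


# Percentages as quoted in the text above.
strong_sell_ratio = preference_ratio(60, 20)
strong_buy_ratio = preference_ratio(78, 10)

print(f"Strong Sell: {strong_sell_ratio:.1f}x preference for bearish messages")
print(f"Strong Buy:  {strong_buy_ratio:.1f}x preference for bullish messages")
```

On these figures the bears favored confirming messages by a factor of 3, and the bulls by 7.8, which is the "~8X" cited above.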

The authors hypothesized that this preference for confirmatory evidence could be attributed to a desire to reduce cognitive dissonance, which might be costly to maintain because it requires deep cognitive elaboration and therefore a higher cognitive load. Consistent with this, the data also suggest that the stronger the opinion, the stronger the tendency to ignore disconfirming evidence and seek out confirmation.

What’s the lesson?

When you have a very high conviction idea, make an active effort to seek out disconfirming evidence in order to offset your tendency towards confirmation.

Reference:

Wason, P. C., & Johnson-Laird, P. N. (1972). Psychology of Reasoning: Structure and Content. Cambridge, MA: Harvard University Press.

About the Author: David Foulke

—

Important Disclosures

For informational and educational purposes only and should not be construed as specific investment, accounting, legal, or tax advice. Certain information is deemed to be reliable, but its accuracy and completeness cannot be guaranteed. Third party information may become outdated or otherwise superseded without notice. Neither the Securities and Exchange Commission (SEC) nor any other federal or state agency has approved, determined the accuracy, or confirmed the adequacy of this article.

The views and opinions expressed herein are those of the author and do not necessarily reflect the views of Alpha Architect, its affiliates or its employees.
