A paper, “Facts about Formulaic Value Investing,” is making the rounds and professes to plunge a dagger directly into the heart of systematic value investors. Half of my inbox is filled with questions regarding this paper, since we are considered by some — rightly or wrongly — to be “experts” on systematic value investing. The implication from the research piece is that systematic value investing can’t compete with a capable human stock picker because these approaches are too simplistic. This piece outlines our thoughts on this paper and systematic value investing approaches more broadly.


Quick Background

Systematic value investing is something I’ve thought about for a long time. We’ve written multiple academic papers on the subject, and I dedicated a few years of my life to writing an entire book on it, Quantitative Value (co-authored with my friend Toby Carlisle). To be clear, old-school value investing runs in my veins, and I genuinely respect those who engage in the pursuit. I spent over 15 years doing old-school micro-cap fundamental and special-situation stock-picking: I made a lot of money in the small-cap value bull market from 2002 to 2007 (“the genius maker market”), but also had the privilege of losing along the way. Still, I simply couldn’t get enough of stock-picking. I became a card-carrying member of the highly selective Valueinvestorsclub.com (VIC), and part of my dissertation (later published, in modified form, in the JFQA) involved reading every single stock pitch ever submitted to VIC (~4,000) and analyzing these fundamental investors’ approaches and performance. I appreciate the art of stock picking, and I think it is a valid approach for some investors. Just not for me. I came to the humbling (and depressing) conclusion that systematic decision-making was a more effective approach to investing (the USMC convinced me of this more than the University of Chicago did).

My final conclusion: I was essentially trying too hard.

Until Charlie Munger decides to hang out with me on a daily basis, I will continue to forgo discretionary stock-picking efforts and will defer to systematic investing approaches, not because this is my natural inclination (stock picking feels more natural), but because evidence and facts need to rule an investment process. (Interested readers can explore why I’ve taken this route here.)

Of course, “Facts about Formulaic Value Investing” seems to suggest that simple systematic value strategies (e.g., sorting stocks on low P/E) are a waste of time and can only be improved through human involvement.

Here are the 2 takeaways from the paper:

- Simple value strategies don’t really deliver better performance (we’ll discuss this claim later in this post)

- “A capable analyst, however, should be able to significantly enhance quantitative approaches with Graham and Dodd-style security analysis.”

Unfortunately, “a capable analyst” is a vague term in the paper. Is this analyst 1) a human stock picker, or is this analyst 2) a more sophisticated algorithm?

A More Sophisticated Algorithm?

If the argument is that an algorithm might be able to build upon a basic book-to-market or earnings-to-price sort, well, we think the evidence supports this argument — the Quantitative Value algorithm is our effort to identify the most robust long-term focused algorithmic value investing approach we could devise.(1)

We also think the paper itself supports this argument, by highlighting how simple algorithmic changes (e.g., adding momentum) can enhance the performance of a basic book-to-market focused value strategy. Could these “enhancements” be vestiges of data-mining? Sure. Nobody can ever eliminate this possibility. In fact, if over the next 50 years a concentrated, simple, low P/E stock portfolio outperforms the vast majority of complex quantitative and fundamentals-based “value” managers, and the broader market, I would not be surprised. And I say this as a representative of a firm that builds and manages systematic value strategies that are decidedly more complex than a concentrated low P/E stock portfolio.(2)

A Human Stock Picker?

The authors sidestep the “more sophisticated algorithm” path and seem to imply that humans are required to deliver the enhanced benefits of more complex value investing, although they don’t say so explicitly in the paper. Nonetheless, a 2017 Business Insider article makes their stance clearer. U-Wen Kok (lead author on the study) states the following:

This paper emphasizes the importance of not just going with a quant screen or simple model…You need human insight.

If the argument is that a human value investing analyst can beat a more complex algorithm, we take issue with that claim. Sure, there will always be the human analyst with the magic touch (whose good performance may or may not be attributable to luck), but we don’t believe there is substantial evidence to support this claim (the fact that the S&P 500 Index, itself a systematic algorithm, beat 90%+ of all managers is a good data point). Moreover, a few years ago we compared the composite performance of all ValueinvestorsClub.com submissions (human value investors who apply Graham and Dodd-style security analysis) from March 1, 2000 to December 31, 2011 to our Quantitative Value Index (an algorithm that applies Graham and Dodd-style security analysis). It’s tough to glean too much from a short sample, but the algorithm still won. Chalk one up for the machines.

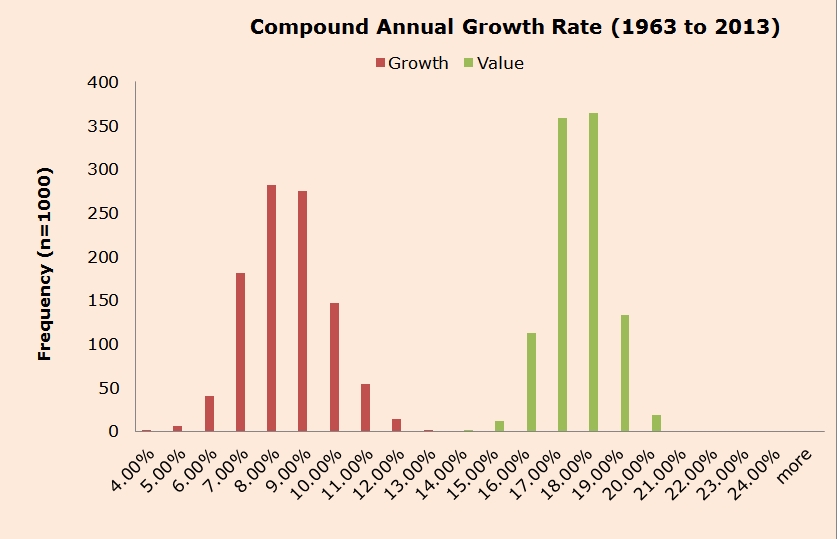

Moreover, we’ve run simulations on portfolios of the cheapest securities to assess the distribution of possible outcomes associated with simply buying “cheap” stocks. A key chart from that post shows the outcomes of randomly choosing 30 stocks from the bin of the 100 cheapest stocks, repeated 1,000 times (n=1,000). One can think of this exercise as “what is possible” for an army of 1,000 value investors randomly picking stocks from the cheap-stock bin.

The distribution shows that the range of outcomes for a cheap-stock value investor is fairly narrow: 15% to 20%. The distribution for expensive (“growth”) stock investors is wider, ranging from ~4% to 13%. Arguably, humans (or improved algos) have a better shot at adding value in the incredibly noisy growth stock arena, but value investors are somewhat limited in how much they can add beyond a simple value-based stock screen. In short, an incredibly simple fundamental/price approach is pretty hard to beat, and any complexity will add only marginal value, regardless of whether this “value-add” comes from a more nuanced value algorithm or a human being sorting through 10-Ks. Within the cheapest decile, even the superstars, human or algorithmic, don’t perform much better than the average cheap-stock value investor.
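To make the random-portfolio exercise concrete, here is a minimal Python sketch of that kind of simulation. It is not the code behind our chart. It assumes a hypothetical array of annual returns for the stocks in the cheap bin, draws 30 names at random 1,000 times, holds each draw as an equal-weight portfolio rebalanced annually, and records each portfolio’s CAGR. The function name and the synthetic data are illustrative only.

```python
import numpy as np

def simulate_random_portfolios(stock_returns, n_picks=30, n_trials=1000, seed=0):
    """Return the CAGR of n_trials random equal-weight portfolios.

    stock_returns: array of shape (n_years, n_stocks) holding annual simple
    returns for the stocks in the "cheap" bin (e.g., the 100 cheapest names).
    Assumption: the same n_picks names are held for the whole period and
    rebalanced to equal weight each year.
    """
    rng = np.random.default_rng(seed)
    n_years, n_stocks = stock_returns.shape
    cagrs = np.empty(n_trials)
    for i in range(n_trials):
        # Draw n_picks distinct stocks (without replacement) from the bin.
        picks = rng.choice(n_stocks, size=n_picks, replace=False)
        # Equal-weight portfolio return in each year.
        port_returns = stock_returns[:, picks].mean(axis=1)
        growth = np.prod(1.0 + port_returns)
        cagrs[i] = growth ** (1.0 / n_years) - 1.0
    return cagrs

# Synthetic returns for illustration only: 20 years x 100 stocks.
data_rng = np.random.default_rng(42)
fake_returns = data_rng.normal(0.12, 0.35, size=(20, 100)).clip(-0.95, None)
cagrs = simulate_random_portfolios(fake_returns)
print(np.percentile(cagrs, [5, 50, 95]))
```

With real data, the spread between the low and high percentiles of `cagrs` plays the role of the “range of outcomes” discussed above: a narrow spread means that which cheap stocks you pick matters relatively little.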

Bottom line: this is a thought-provoking paper on formulaic value investing, and we recommend everyone check it out. What follows is a rather detailed “reader’s guide” to interpreting and thinking critically about some of the paper’s claims. We go beyond our typical academic summaries because we find the topic of systematic (or formulaic) value investing extremely interesting.

The paper is divided into 5 core sections:

- Brief history of value investing

- The performance of formulaic value investing

- What does formulaic value really identify?

- The interaction between formulaic value and momentum

- Quantifying the benefits of a more detailed fundamental analysis

We examine each of these in what follows…

Digging into the Paper

Introduction Section: Brief history of value investing

The paper starts off with a discussion of the “brief history of value investing.” The authors attempt to support their claim that value investing requires humans/complexity by invoking a quote from Graham and Dodd’s original 1934 book, Security Analysis, that seems to imply that Ben Graham and David Dodd did not believe in simple value approaches based on fundamental-to-price ratios. This is somewhat confusing, because later in life Graham explicitly offered the following advice:(3)

I am no longer an advocate of elaborate techniques of security analysis in order to find superior value opportunities. This was a rewarding activity, say, 40 years ago, when our textbook “Graham and Dodd” was first published; but the situation has changed a great deal since then. In the old days any well-trained security analyst could do a good professional job of selecting undervalued issues through detailed studies; but in the light of the enormous amount of research now being carried on, I doubt whether in most cases such extensive efforts will generate sufficiently superior selections to justify their cost. To that very limited extent I’m on the side of the “efficient market” school of thought now generally accepted by the professors.

Through the lens of today’s investment landscape, the quote seems to suggest that Graham was recommending a pure Bogle-esque “passive approach,” but this is not what Graham had in mind. In the same 1976 article, Graham goes on to endorse simple value investing. This seems to be the opposite of what the authors claim Graham supports. Specifically, Graham recommends that an investor create a portfolio of at least 30 stocks meeting specific price-to-earnings criteria (below 10) and debt-to-equity criteria (below 50 percent) to give the “best odds statistically,” and then hold those stocks until they had returned 50 percent or, if a stock hadn’t met that return objective by the “end of the second calendar year from the time of purchase, sell it regardless of price.” So much for elaborate techniques. It sounds like old Ben Graham does suggest that investors follow simple systematic value systems.
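Graham’s rules are simple enough to write down in a few lines. The Python sketch below encodes the screen and the sell rule as described above; the `Stock` class and function names are hypothetical, and three details are our own assumptions, flagged in the comments: excluding negative P/E stocks, measuring the 50 percent gain on price alone, and how “end of the second calendar year” is read.

```python
from dataclasses import dataclass
from datetime import date

@dataclass
class Stock:
    ticker: str
    pe: float              # price-to-earnings ratio
    debt_to_equity: float  # 0.4 means debt is 40 percent of equity

def graham_screen(stocks, max_pe=10.0, max_de=0.5, min_holdings=30):
    """Keep stocks with P/E below 10 and debt/equity below 50 percent."""
    # Requiring P/E > 0 (excluding money-losing firms) is our assumption.
    passing = [s for s in stocks if 0 < s.pe < max_pe and s.debt_to_equity < max_de]
    if len(passing) < min_holdings:
        raise ValueError(f"only {len(passing)} stocks pass; Graham wanted at least {min_holdings}")
    return passing

def should_sell(purchase_price, current_price, purchase_date, today):
    """Sell after a 50 percent gain, or once the holding deadline is reached."""
    # Price-only gain (dividends ignored) is a simplifying assumption.
    if current_price >= 1.5 * purchase_price:
        return True
    # "End of the second calendar year from the time of purchase" is read
    # here as December 31 of (purchase year + 2); other readings are possible.
    deadline = date(purchase_date.year + 2, 12, 31)
    return today >= deadline
```

The point is less the exact thresholds than how little machinery the rules need: two ratios, a minimum count, and two exit conditions.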

Following the Graham discussion, the authors discuss the current state of systematic value investing. In particular, they suggest that simple value methodologies may be overdone:

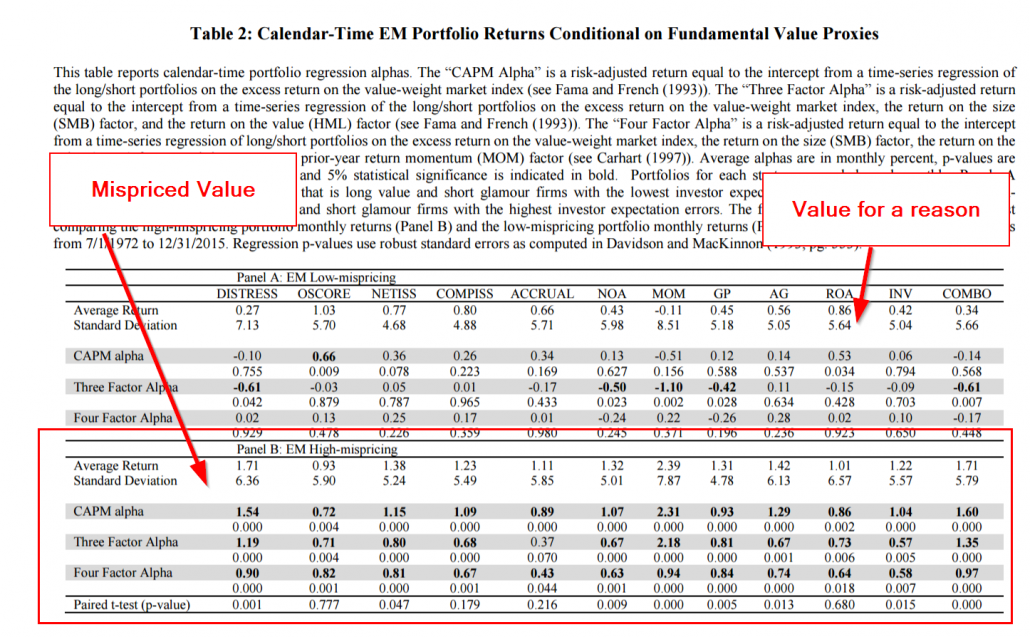

Today, such products [simple systematic value strategies] are ubiquitous.

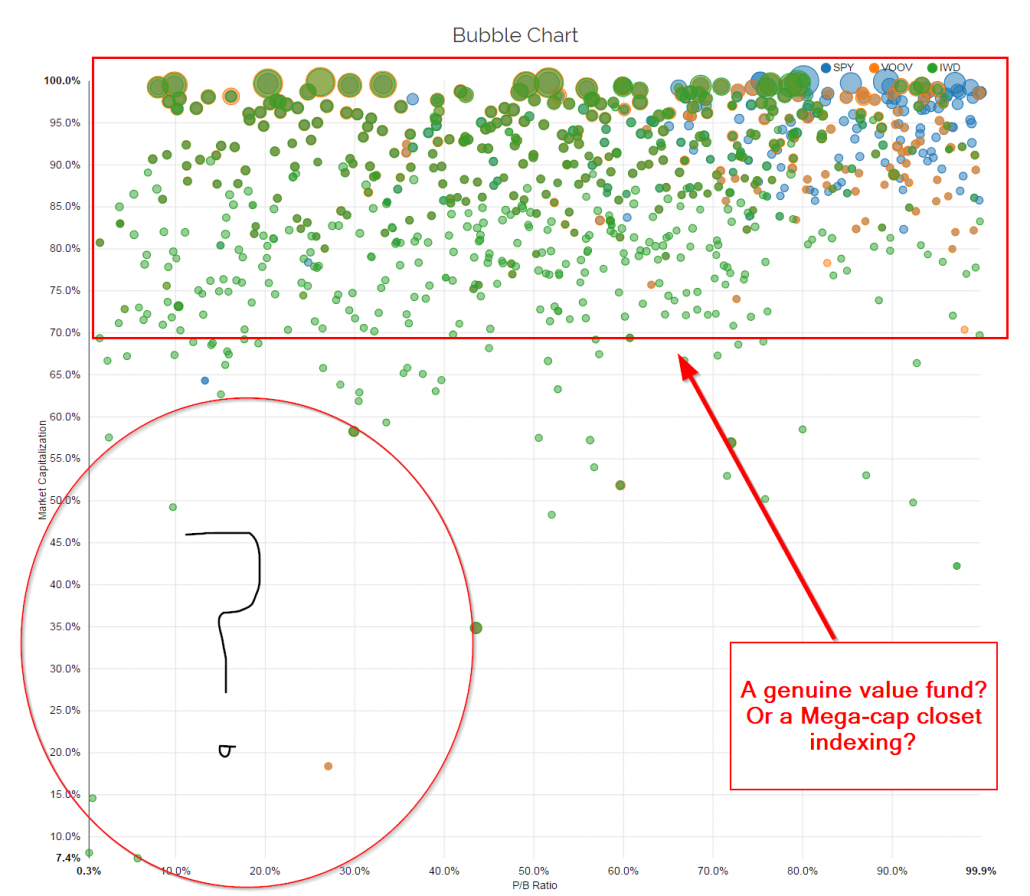

The authors cite the Vanguard Value Index Fund and the iShares Russell 1000 Value ETF as examples of so-called simple “value funds” with enormous asset bases. Let’s first examine the claim that these are value funds. If a simple value fund is the type outlined by Graham, concentrated and focused on the “cheapness” characteristic, there are actually very few value funds in the marketplace. Below is a graphic from our new visual active share tool, which lets an investor visualize the holdings of these two “value” funds across two stock characteristics: cheapness and size. The x-axis maps all holdings on the simple price-to-earnings metric. The y-axis is market capitalization. (Note: price-to-book shows a similar pattern.)(4)

A Graham-style investment portfolio would have ~30 dots clumped towards the left section of the chart, and probably sit across the market cap spectrum (likely more small/mid relative to mega-cap).

VOOV and IWD do not appear to be value investing funds at all. There is little relationship between their holdings and “cheapness.” The holdings seem to be spread out across the cheapness spectrum, with no clear characteristic tilt that would even capture the value premium. The only relationship that is painfully obvious is that these portfolios are strongly correlated with the “size” characteristic.

To claim that simple value investing based on fundamental/price ratios is “ubiquitous” doesn’t seem to be supported by an analysis of the construction of the specific portfolios the authors cite as examples, such as VOOV, IWD, or the DFA funds (we don’t have mutual fund data in visual active share, but the story would be the same as for VOOV and IWD).(5) These so-called “value” funds are not value funds at all. If they were, we would see more evidence of it. Instead, these funds are mega-cap closet-indexing funds whose holdings show no relationship to the value anomaly that Ben Graham would recognize as “systematic value investing.” In the authors’ defense, these sorts of funds do represent what many in today’s marketplace consider to be “value” funds, so it is reasonable to use them in their discussion. Our point is more of a rant on what should appropriately be defined as a “value” investing fund. Having the word “value” in your fund title doesn’t make you a value fund, at least based on how Ben Graham and generations of academics have defined value…

But let’s move on.

Section 1: The Performance of Formulaic Value Investing

The authors come out of the box making the claim that the evidence supporting the long-term performance of formulaic value investing is not very compelling.

Despite the current popularity of formulaic value-investing strategies, the evidence supporting the outperformance of formulaic value is not very compelling.

The authors cite a slew of excellent academic research articles on various aspects of the book-to-market characteristic and its relationship with stock returns. These papers focus on book-to-market because it has been the most common ratio in the academic literature since Fama and French (1992). Interestingly enough, they point to Loughran (1997), which finds evidence that book-to-market may not be as effective as previously documented. This citation is used to support the claim that the “outperformance of formulaic value is not very compelling.” However, the authors forgot to include Loughran and Wellman (2009), which shows that formulaic value investing using enterprise multiples (our personal favorite valuation metric) generates much higher returns than book-to-market. In other words, formulaic value investing might still be compelling. But there’s more…
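For readers unfamiliar with enterprise multiples, the sketch below shows one common way to compute an operating-earnings yield on total enterprise value (EBIT/TEV) and pick the cheapest decile. It is a simplified illustration: Loughran and Wellman’s exact definition may differ (for example, in which earnings measure is used), and the function names, the simplified TEV formula, and the decile cutoff are our assumptions.

```python
def enterprise_value(market_cap, total_debt, cash, preferred=0.0, minority_interest=0.0):
    """Simplified total enterprise value (TEV): what it costs to buy the whole firm."""
    return market_cap + total_debt + preferred + minority_interest - cash

def earnings_yield_on_tev(ebit, market_cap, total_debt, cash, **kwargs):
    """EBIT/TEV: higher means cheaper. Returns None when TEV is not positive."""
    tev = enterprise_value(market_cap, total_debt, cash, **kwargs)
    return ebit / tev if tev > 0 else None

def cheapest(yields, fraction=0.1):
    """yields: dict of ticker -> EBIT/TEV. Returns the top `fraction` of tickers by yield."""
    valid = {t: y for t, y in yields.items() if y is not None}
    n = max(1, int(len(valid) * fraction))
    return sorted(valid, key=valid.get, reverse=True)[:n]
```

Unlike B/M, this ratio puts debt and cash in the denominator, so two firms with the same equity price but different balance sheets can look very different.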

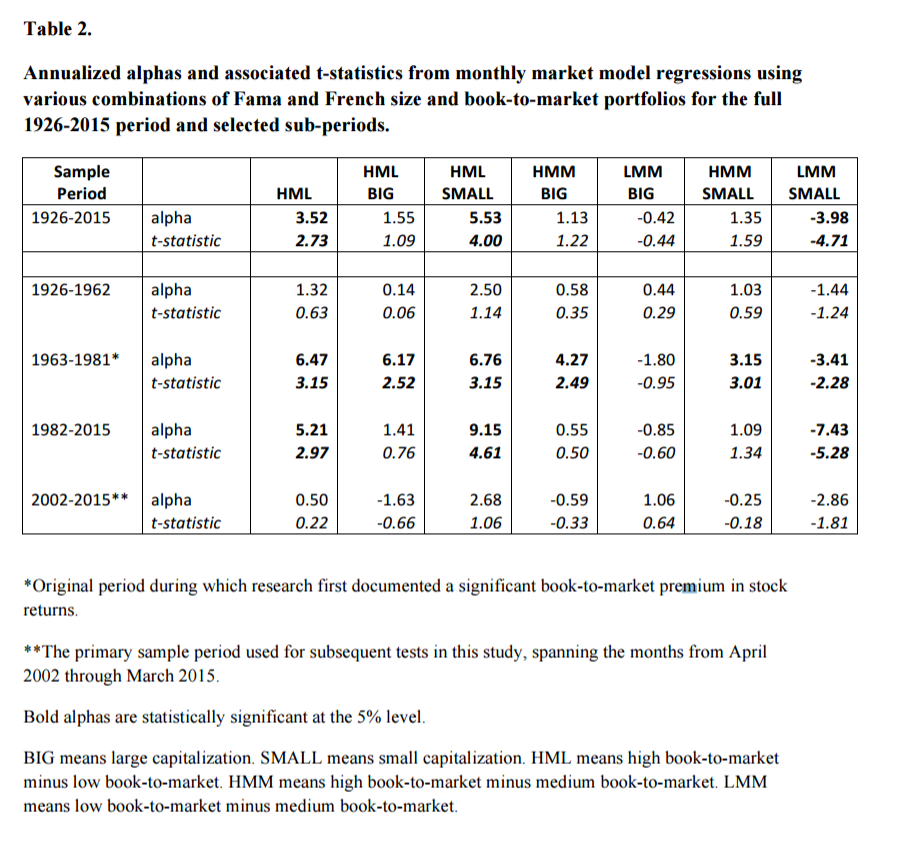

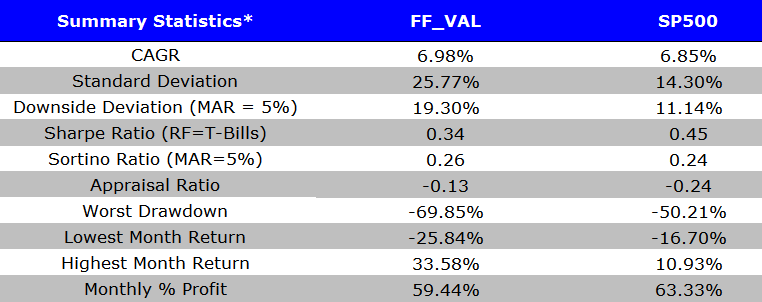

The authors highlight some results on various long/short value portfolios.

Here is the table from their paper:

The authors focus on “alphas,” which are generated from factor models, a practice we have discussed in detail as being more art than science. Solid analysis and a great table, but there is a problem: investors don’t invest in “alphas,” nor do they typically invest in long/short portfolios.(6) So let’s peel back some of the quant bullsh$% and look at some stats that better reflect the experience of most investors who may be considering allocating a significant portion of their portfolio to a value-based long-only stock strategy.(7)

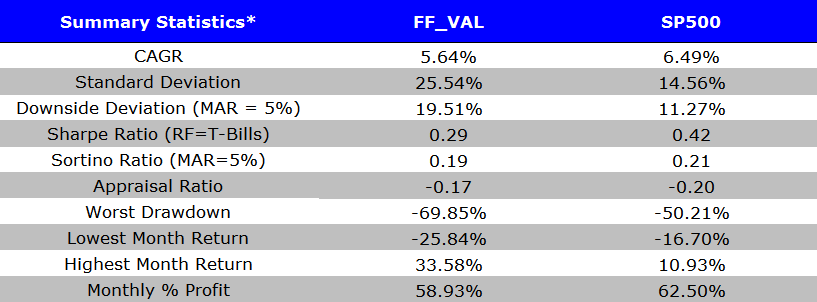

To assess simple systematic value investing from the viewpoint of an actual investor (long-only strategic allocation), we look at the long-only, top-decile book-to-market, annually rebalanced, value-weighted portfolio relative to the generic market portfolio (e.g., S&P 500). The data come from Ken French’s website. The results are gross of fees and are total returns, including dividends.

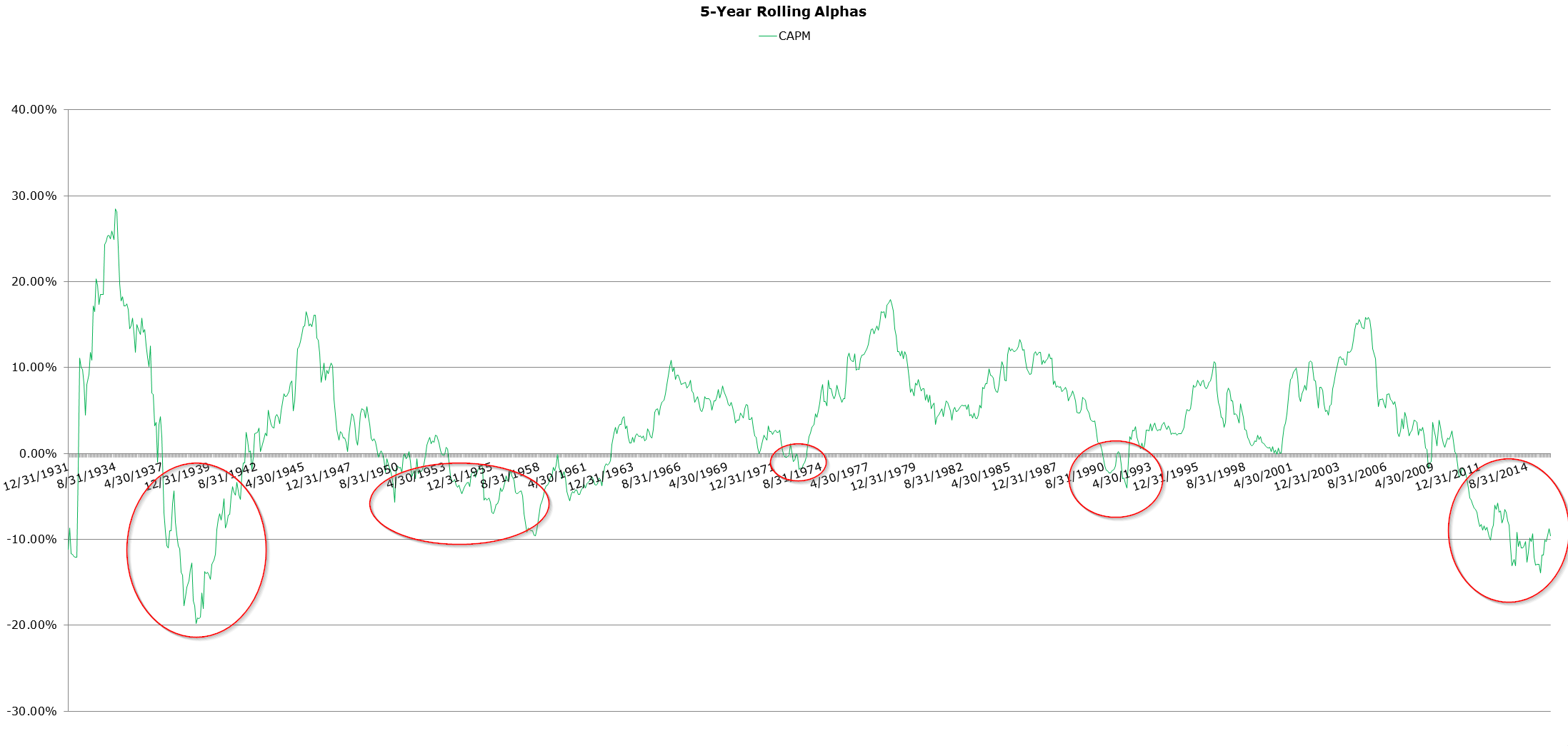

First, let’s look at a comprehensive view on long-only alphas over time using the simple “market model” to control for generic market exposure (similar to what is done in the paper’s “Table 2”). The alpha estimates shown are estimated over 5-year rolling look-back periods.
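A rolling market-model alpha like the one described above can be sketched in a few lines of pandas. The sketch assumes monthly excess returns (returns minus the risk-free rate) for the value portfolio and the market, for example series built from Ken French’s data library; the function name is ours, and annualizing by multiplying the monthly intercept by 12 is a simple convention, not necessarily the one used in our chart.

```python
import numpy as np
import pandas as pd

def rolling_market_model_alpha(value_excess, market_excess, window=60):
    """Rolling OLS of value excess returns on market excess returns.

    Both inputs are pd.Series of monthly excess returns on the same index.
    window=60 months corresponds to a 5-year look-back. Returns the annualized
    intercept (monthly alpha x 12); the first window-1 values are NaN.
    """
    y, x = value_excess, market_excess
    # OLS slope = cov(y, x) / var(x); both use the same ddof, so the ratio is exact.
    beta = y.rolling(window).cov(x) / x.rolling(window).var()
    # OLS intercept = mean(y) - beta * mean(x).
    alpha_monthly = y.rolling(window).mean() - beta * x.rolling(window).mean()
    return alpha_monthly * 12
```

Because each estimate uses only the trailing five years, the series shows how “alpha” drifts over time rather than a single full-sample number.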

The long-term rolling history of alpha estimates associated with generic value strategies reflects the history of generic value investing: sometimes it works, sometimes it doesn’t. But given the ebbs and flows of value that occur over this longer time frame, the recent decrease in “alpha” doesn’t fit the narrative that somehow value has been “arbitraged away.”

How do we know this isn’t just another extended losing streak?

- Did investors in the 1930s, with their incredible computing power and armies of CFAs, decide it was time to arbitrage away the value premium? Clearly not. Or perhaps they got smarter in the 1950s and decided to arbitrage value away at that point, because Graham wrote his book on value investing and it was becoming more popular? Not likely.

- Wait…wait…I know…investors read Charlie Ellis’s “The Loser’s Game,” published in 1975, waited 15 years to act on it, and then in the early 1990s decided that value was compelling and needed to be arbitraged away?

- Actually, I finally got the answer…what happened is that computers became so powerful in 2005, and everyone believed so strongly in the value premium, investors started exploiting the value anomaly to the full extent possible. Now we know why the value premium has sucked wind the past decade — soooo obvious.

The above is obviously tongue-in-cheek, but it works as an informal proof by contradiction: the narrative that value has been arbitraged away because its risk-adjusted performance has sucked the past few years isn’t that compelling. Possible? Of course. Compelling? Not really. Suggesting that value is “dead” because it has been “arbitraged away” over the recent period reflects a fundamental misunderstanding of market efficiency and the limits of arbitrage. Value “works” precisely because it’s arguably riskier, suffers unpredictable bouts of underperformance, and delegated asset managers can’t handle owning tragic stories that are unlikely to be resolved anytime soon (and could thus cause large benchmark tracking error problems). If value were permanently “arbitraged” away, it would actually be incredibly puzzling, because the implication of that hypothesis is that investors have dramatically changed their risk preferences and/or that short-term performance chasing no longer influences manager behavior. Remotely possible, but not plausible.

All that said, can the average value premium decrease over time? Sure. Can that premium be highly volatile over long periods of time? Certainly. Will that premium go to zero and/or become negative? Unlikely, unless you believe risk preferences have changed and/or you believe human nature and institutional incentives to abide by the short-term horizon imperative have changed.(8)

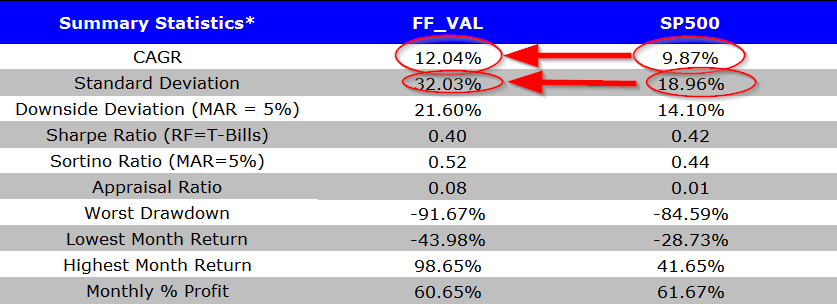

But I digress…I’ll shut up now and we’ll look at some more data. Specifically, let’s examine the long-only generic B/M value portfolio over various periods (denoted FF_VAL, or “Fama and French Value”):

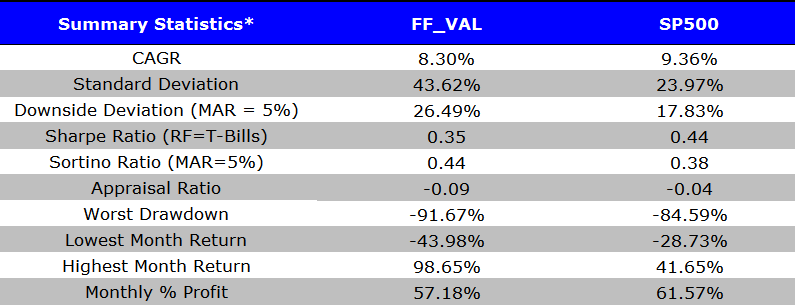

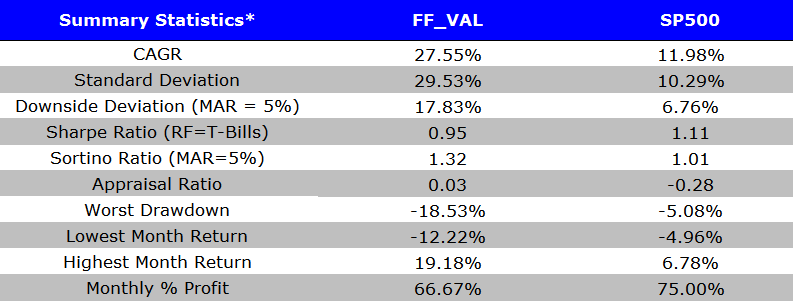

1927 to 2015: A good run for value, but with extra risk.

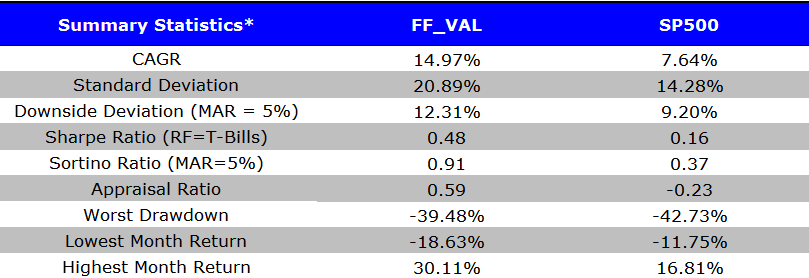

1927 to 1962: A bad run for value

1963 to 1981: An epic run for value

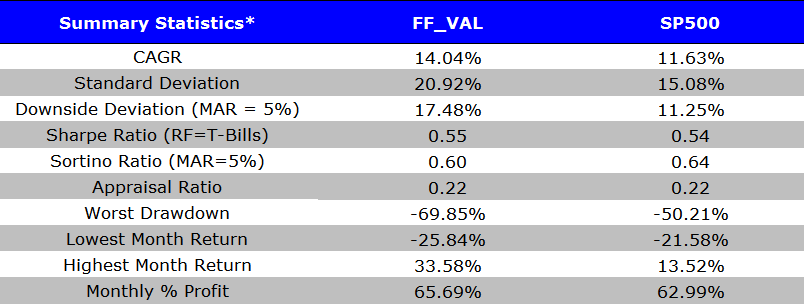

1982 to 2015: Solid run for value

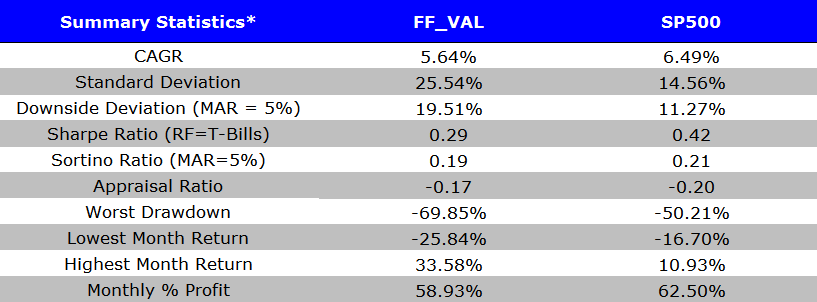

2002 to 2015: A bad run for value

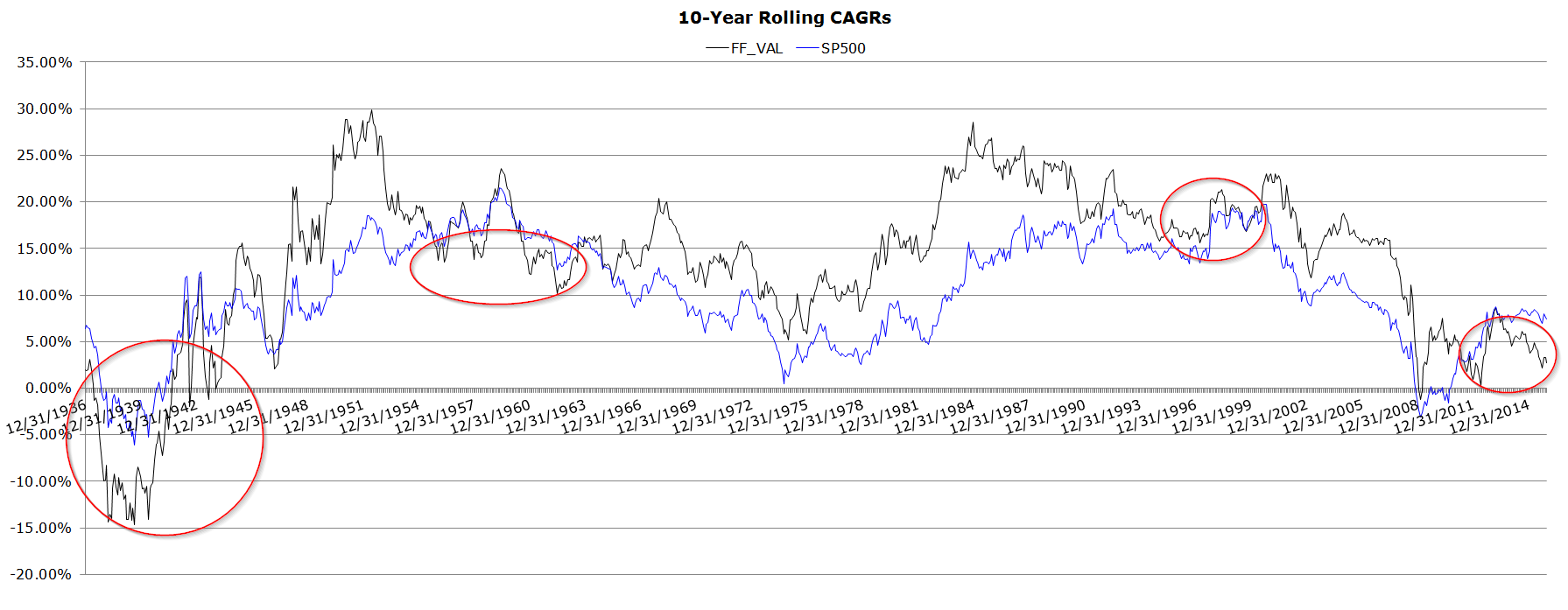

The tables above, which closely mirror the time periods outlined in the paper, show that value has long stretches of relative performance that can be either favorable or unfavorable relative to a passive equity index. However, any chosen period could be misleading and can be used to tell or promote a specific story. A more transparent view of simple value’s performance over time is a 10-year rolling CAGR chart. It avoids the risk that the periods above were picked to support a particular narrative: the reader sees every possible 10-year performance window relative to generic beta, each telling its own story, so there is no way to cherry-pick a window you happen to like or dislike. Some of the famous bad value investing runs are highlighted with red circles.

There are clearly times when value has a tough time relative to the benchmark, but in general, there are higher historical returns associated with value strategies (FF_VAL wins approximately 75% of the time over all possible 10-year rolling periods). Perhaps this outperformance is compensation for additional risk (the DFA story), or perhaps there are elements of systematic mispricing (the LSV story). The truth is that the additional returns are probably associated with a mixture of both — higher risk and an element of highly volatile mispricing. The AQR crew say it best: “Our best guess is that both risk and behavioral causes are at work.”(9)
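The rolling 10-year comparison and the “wins approximately 75% of the time” statistic can be computed along the following lines. This is a sketch assuming monthly total returns for FF_VAL and the market as pandas Series on a shared index; the helper names are hypothetical. Note that overlapping windows share most of their months, so the win rate is not based on independent observations.

```python
import numpy as np
import pandas as pd

def rolling_cagr(returns, years=10, periods_per_year=12):
    """Annualized return over each trailing window of `years` years.

    returns: pd.Series of simple periodic returns (monthly by default).
    """
    n = years * periods_per_year
    growth = (1.0 + returns).rolling(n).apply(np.prod, raw=True)
    return growth ** (1.0 / years) - 1.0

def win_rate(strategy, benchmark, years=10, periods_per_year=12):
    """Share of complete rolling windows in which the strategy's CAGR beats the benchmark's."""
    diff = (rolling_cagr(strategy, years, periods_per_year)
            - rolling_cagr(benchmark, years, periods_per_year)).dropna()
    return float((diff > 0).mean())
```

Plotting `rolling_cagr(strategy) - rolling_cagr(benchmark)` gives the relative-performance chart; `win_rate` collapses it into a single number.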

The other empirical observation is that the recent underperformance of the generic B/M value premium is not unique to history. At all. In fact, it’s quite common. When it comes to pain, value strategies have, “Been there done that.”

In summary, there is weak evidence (at best) that the simplistic B/M value investing approach is completely ineffective (at least viewed from a long-only perspective and when considered over a reasonably long time frame).(10) Moreover, this analysis looks at book-to-market (B/M), which the authors of the paper under discussion examine in great detail. We agree B/M isn’t the greatest valuation metric out there: the evidence suggests that the “best” simple value investing approach is sorting on enterprise multiples: see here, here, here, here, and here for examples. But in defense of B/M, and implicitly Dimensional Fund Advisors (DFA), a firm that we hold in high regard, making the claim that a dead simple B/M approach isn’t effective might be a stretch.(11) This may be especially true if one considers turnover, taxes, and scalability issues that might plague more complex systematic value systems.

To be clear, we aren’t here to sell the world on the glory of B/M; in fact, we often tell the world it’s not that great. But we do think it is fair to defend the ratio as a reasonable approach to consider when exploiting the value premium.(12)

That said, the authors are correct in pointing out that simple value investing approaches are not a panacea. This is especially true for B/M, which has all kinds of issues, including increased risk, the potential for epic stretches of underperformance, benefits that are limited to non-mega-cap stocks, and so forth.(13)

Section 2: What Does Formulaic Value Really Identify?

This section starts with the following premise:

If investing on the basis of fundamental-to-price ratios does not identify underpriced securities, what does it identify?

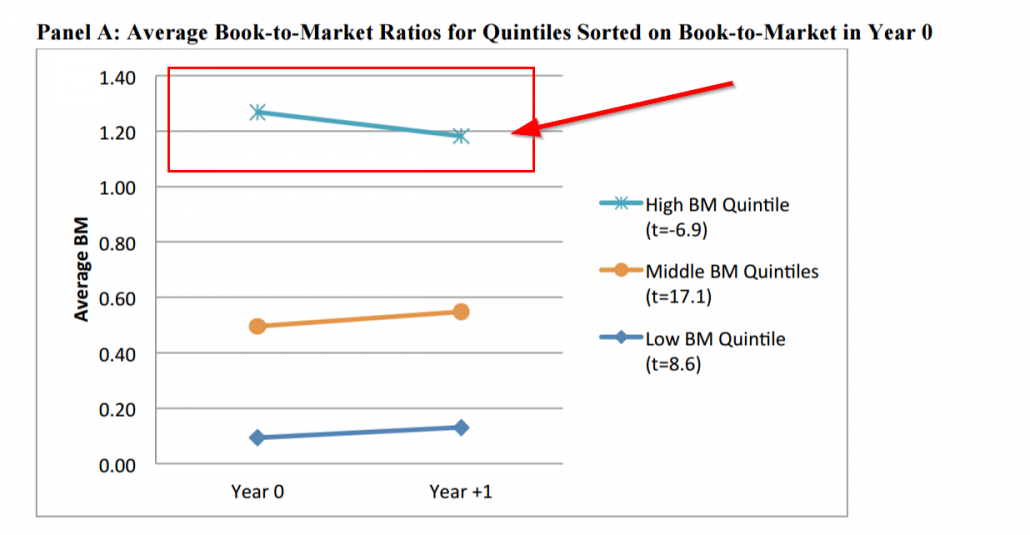

First, the authors highlight that over the period they analyze (2002 to 2014), fundamental/price ratios appear to mean-revert from year to year. The chart below shows that the average B/M for stocks in the cheapest B/M quintile drifts lower over time.

A fundamental-to-price ratio has two components: 1) a fundamental and 2) a price. Say the fundamental is book value and the price is market capitalization, giving B/M. For B/M to decrease, either book value decreases or market cap increases.
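Taking logs turns the ratio into a difference, which makes the two channels explicit. This is only the basic identity underneath any such decomposition; the authors’ three-part version (which also separates out a “status quo” element) is more detailed, so treat this as a simplified sketch in our own notation, with $B_t$ for book value and $M_t$ for market cap in year $t$:

```latex
\Delta \ln\!\left(\frac{B}{M}\right)
  = \ln\frac{B_{t+1}}{M_{t+1}} - \ln\frac{B_t}{M_t}
  = \underbrace{\left(\ln B_{t+1} - \ln B_t\right)}_{\text{book value change}}
  - \underbrace{\left(\ln M_{t+1} - \ln M_t\right)}_{\text{market cap change}}
```

For example, if book value falls from 100 to 80 while market cap stays at 100, B/M falls from 1.0 to 0.8 and $\Delta\ln(B/M) = \ln 0.8 \approx -0.22$, all of it coming from the book value term.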

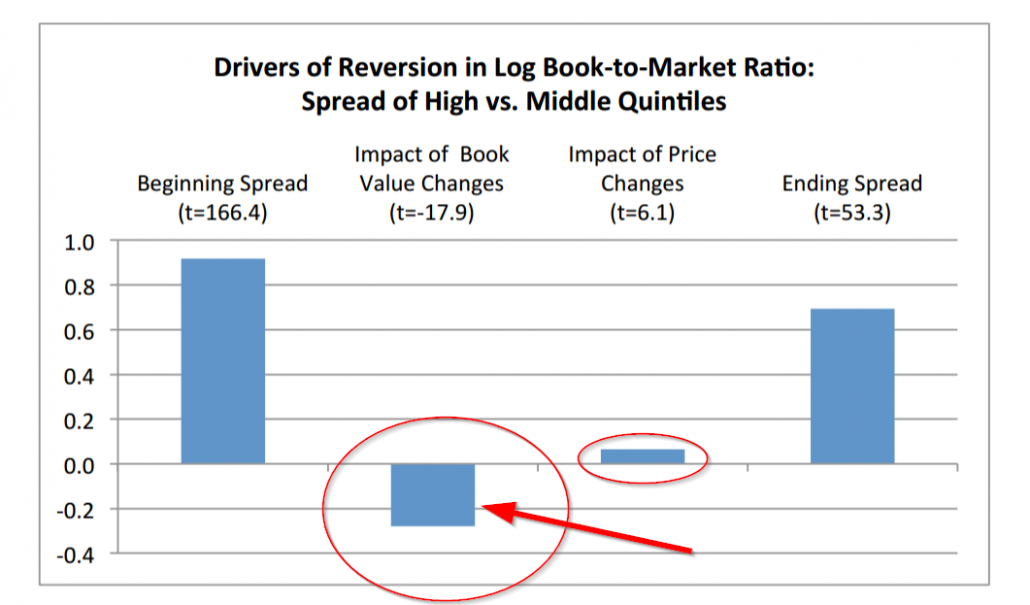

To identify why high B/M firms mean revert in this sample period, the authors conduct a formal decomposition of the beginning B/Ms and ending B/Ms, which breaks the B/M transition into three elements: 1) book value movement, 2) market cap movement, and 3) the status quo. The analysis is really interesting and I commend the authors on providing excellent visuals to explain the idea. Well done!

The chart below is an example of the analysis, which shows that over the short sample period (2002-2014), book value deterioration, not price increases, drives the mean reversion.

The authors claim that their evidence supports the hypothesis that bad news was essentially priced correctly. That is, the cheap B/M firms were cheap, on average, because they were businesses with deteriorating fundamentals, as reflected in book values that went on to decline (cheap for a reason!), not businesses that were temporarily mispriced. If there were mispricing, the price change component would arguably be a lot more positive. This claim is correct based on the sample analyzed; however, it would be nice to see more detail and robustness checks, along with a discussion of the limitations of a short sample period.

Here are a few things that popped into my head:

The data are incredibly noisy and may be picking up a size effect, not necessarily a value effect

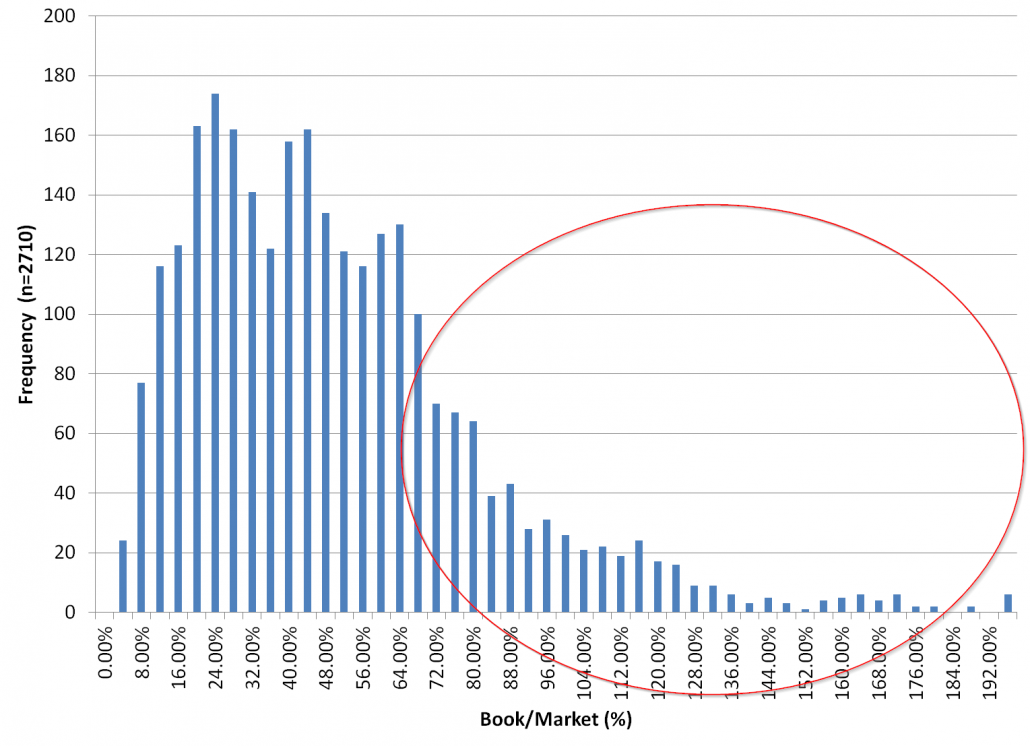

The authors look at the R3K universe, winsorize at the 1% level to eliminate “outliers,” and then take averages. This is definitely reasonable, but below are current histograms of B/M and market cap for R3K constituents that have a B/M value, winsorized at the 1% level.

B/M

Note the incredible skew in B/M ratios. That’s why researchers work with the log of B/M: the log is a monotonic transformation that pulls in the long right tail, brings the distribution closer to normal, and simplifies interpretation. All good, but still important to keep in mind when looking at results based on “averages,” especially when the average is of changes in log B/M.
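A minimal NumPy sketch of the winsorize-then-log step, assuming arrays of book equity and market cap for a single cross-section. Treating firms with non-positive book equity or market cap as missing (log B/M is undefined for them) and clipping at the 1st and 99th percentiles of B/M are our assumptions about the details; the paper’s exact procedure may differ, and the function names are illustrative.

```python
import numpy as np

def winsorize(values, pct=0.01):
    """Clip values at the pct and (1 - pct) quantiles, ignoring NaNs."""
    lo, hi = np.nanquantile(values, [pct, 1 - pct])
    return np.clip(values, lo, hi)

def log_bm(book_equity, market_cap, pct=0.01):
    """Winsorized log book-to-market; invalid firms are returned as NaN."""
    book = np.asarray(book_equity, dtype=float)
    mcap = np.asarray(market_cap, dtype=float)
    bm = np.full(book.shape, np.nan)
    ok = (book > 0) & (mcap > 0)  # log(B/M) needs a positive ratio
    bm[ok] = book[ok] / mcap[ok]
    return np.log(winsorize(bm, pct))
```

Averages of `log_bm` (or of its year-over-year changes) are what the paper’s “average” results refer to, which is why the skew and the clipping choices matter for interpretation.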

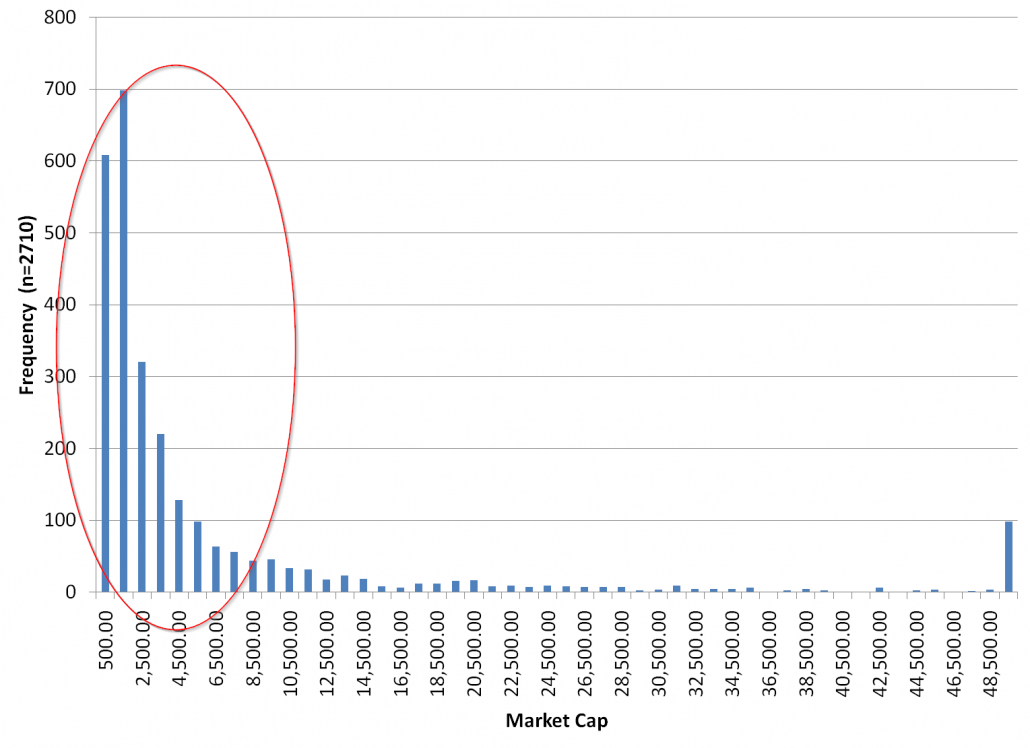

Market Cap

Note that the vast majority of the observations are associated with small/mid caps, but the bulk of the market value is associated with large/mega-caps. For example, the top 10% largest names in the universe represent 73% of the universe’s market value.

The observation that market caps are highly skewed is potentially important for interpreting the results. The vast majority of the observations in the study are associated with the smaller stocks in the market, even though these observations represent only 27% of the universe’s market cap. So any takeaway from the analysis is a statement about small-cap stocks, not necessarily a statement about the stock market as a whole. It would be incredibly helpful if the authors decomposed the “average” results along the size dimension. This analysis may actually help their story as well.
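The gap between the “average firm” and the market as a whole is easy to check. The sketch below, assuming a hypothetical array of market caps, computes the share of total market value held by the largest 10% of names and contrasts an equal-weighted average of some characteristic with a cap-weighted one. Function names are illustrative.

```python
import numpy as np

def top_share(market_caps, top_fraction=0.10):
    """Fraction of total market value held by the largest `top_fraction` of names."""
    caps = np.sort(np.asarray(market_caps, dtype=float))[::-1]
    k = max(1, int(round(len(caps) * top_fraction)))
    return caps[:k].sum() / caps.sum()

def equal_vs_value_weighted(x, market_caps):
    """Equal-weighted and cap-weighted averages of a characteristic x."""
    x = np.asarray(x, dtype=float)
    w = np.asarray(market_caps, dtype=float)
    return x.mean(), np.average(x, weights=w)
```

When `top_share` is large (the 73% figure above), an equal-weighted average is dominated by small and mid caps, which is why splitting the results by size could be informative.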

Is this really a story about Financials and the 2008 Financial Crisis?

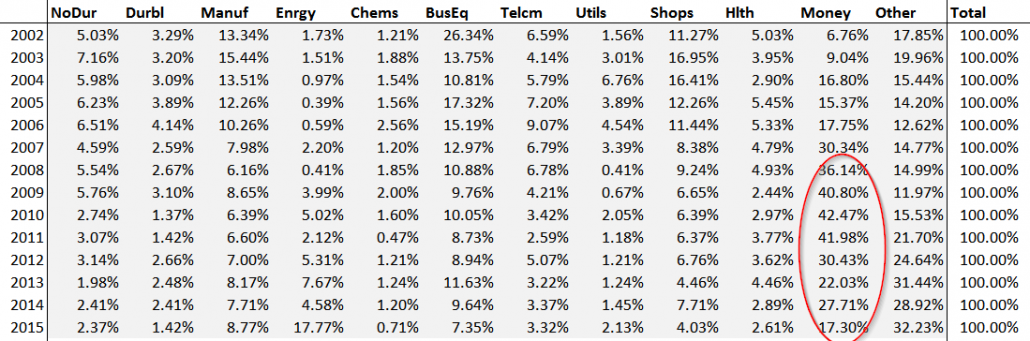

Another aspect that requires further investigation is the unique industry exposures B/M strategies experienced during this short sample period. Here is a breakdown of the industry exposure for the top quintile of B/M stocks from 2002 to 2015.

What do you notice?

As for me, I spotted the extreme loading in financials around the Financial Crisis. Hmm. So are the results in this paper really a story about simple value investing, or a reflection of the well-established fact that simple value got crushed during the Financial Crisis, especially in the financial sector? The authors should provide additional insight into this extreme data point and determine whether it drives the decomposition results.

The time horizon is really short — 2002 to 2015.

It is tough to be statistically confident in conclusions drawn from so few independent observations, especially given the (arguably) unique characteristics of the sample period. Perhaps in this period the junk stocks with busted business plans simply failed, as expected. Perhaps investors bought risk and the risk didn’t pay off. Or perhaps there was mispricing, and the ex-ante bet that prices were too cheap relative to fundamentals had a positive edge, but in this short sample the “edge” wasn’t realized. The empirical tests the authors design are pretty clever, but without more out-of-sample testing it would be odd to embrace the “simple value is dead” hypothesis. Cheap-stock strategies have historically gone through long relative performance droughts, during which, presumably, the same empirical pattern held: mean reversion in B/M driven by worsening fundamentals, not by price corrections to mispricing.

Also, on the point about small sample size, here are the results from 2002 to 2015, the sample period in which the authors conducted their decomposition analysis.

2002 to 2015: A bad run for value

But what happens if we add one year? Say… 2016.

Below are the summary stats on generic BM value and the S&P 500 for 2016.

And here is the table for 2002 through 2016.

Simple value is back in the performance lead.

Wow. What a difference a year makes, huh? FF_VAL crushed the S&P 500.

If we are looking at a 2002 to 2015 sample, which provides 13 annual change observations, adding one more observation in which the B/M strategy crushed the S&P 500 might completely reverse the conclusions drawn from the initial decomposition sample, which is only one year shorter. My hunch is that most of the 2016 value performance gain was driven by price action in financials following the Trump election (not by changes in year-over-year book values). If the authors ran their decomposition analysis over 2015-2016, they’d presumably find a negligible change in book value but an epic change in price. Perhaps these observations would offset all the horrific observations likely seen in the 2008 Financial Crisis, when B/M transitions for financials were likely driven by massive year-over-year changes in book value.

Who knows. The authors have the data and they could investigate these questions for us.

Bottom line: individual stocks experience extreme volatility, and there is a lot of insanity in the stock market. Analysis of the sort conducted by the authors needs to be taken with a healthy grain of salt and would require multiple decades of data to generate any deeply robust insights. This is not a knock on the authors, who do a great analysis with the data available, but a broader point about how a longer-term analysis could significantly change the conclusions.

Another smaller point to consider:

Is one year really the right horizon for assessing revisions in market expectations?

In the original LSV (1994) paper, the authors look at 5-year changes in fundamentals. There is also an interesting discussion by David Dreman that looks at 5-year changes to assess whether cheap stocks are cheap for a reason or cheap because the market is short-sighted. Value strategies are arguably slow-moving anomalies that often have limited turnover from year to year. Perhaps it takes 3 to 5 years for any mispricing in cheap stocks to be recognized by market participants? We already know that the empirical evidence supports the notion that simple value strategies can be rebalanced over 1-, 3-, and even 5-year horizons and still show signs of strong expected performance. It would be nice to see this analysis, but multi-year horizons would make the limited-observation problem even more extreme; with a sample this short, it is pretty much off the table.(14)

Section 3: The Interaction between Formulaic Value and Momentum

No real comments here. We already know that one can take systematic value portfolios, sort them on momentum, and generate better expected results. We also know that there are many ways to separate the winners from the losers among cheap value stocks. For example, there are any number of “quality” measures one could use, there is the classic “F-score,” and one can even use known mispricing factors to help identify winners and losers among the cheap stock buckets (we look at 11 of them in our tests of the enterprise multiple value factor).
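Sorting value portfolios on momentum amounts to a conditional (sequential) double sort. A minimal pandas sketch, assuming a DataFrame with one row per stock and hypothetical columns `bm` (book-to-market) and `mom_12_2` (a past-return momentum measure); the quintile and median cutoffs are illustrative choices, not the paper’s.

```python
import pandas as pd

def value_momentum_sort(df, value_col="bm", mom_col="mom_12_2", value_q=0.2, mom_q=0.5):
    """Keep the cheapest value_q of stocks, then the top mom_q of those by momentum."""
    # Step 1: cheapest slice of the universe (highest book-to-market).
    cheap = df[df[value_col] >= df[value_col].quantile(1 - value_q)]
    # Step 2: within that slice only, keep the strongest momentum names.
    return cheap[cheap[mom_col] >= cheap[mom_col].quantile(1 - mom_q)]
```

Swapping `mom_col` for a quality score or an F-score column gives the other “separate winners from losers” variants mentioned above, with the same two-step structure.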

Not sure what this section teaches us beyond what academic researchers have known for decades. And perhaps these additional signals are really just data-mining? I don’t know and I don’t think anybody really does.

Section 4: Quantifying the Benefits of a More Detailed Fundamental Analysis

This section asks an interesting question: what if we knew with perfect foresight where B/M ratios would be a year from now? Not surprisingly, the results are strong due to look-ahead bias. (We saw the same effect when we analyzed God’s portfolio.) One could earn a 36.1% annual hedge portfolio return, whereas a version of the analysis without look-ahead bias would generate 4.1% a year. The argument is that more sophisticated analysts could somehow consult their crystal balls and determine ex-ante which value stocks are cheap for a reason and which are cheap due to irrational mispricing. Honestly, if there were security analysts with this capability, they’d be multi-billionaires by now, because compounding at 30%+ rates, or even 20%+ rates, is (almost) mission impossible for any extended period of time.
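To put those rates in perspective, a quick compounding calculation (illustrative arithmetic only; the 20-year horizon is our choice):

```python
def growth_multiple(annual_rate, years):
    """How many times an initial stake grows at a constant annual rate."""
    return (1.0 + annual_rate) ** years

for rate in (0.20, 0.30):
    print(f"{rate:.0%} a year for 20 years: {growth_multiple(rate, 20):,.0f}x")
```

At 20% a year the stake grows roughly 38-fold over 20 years; at 30%, roughly 190-fold.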

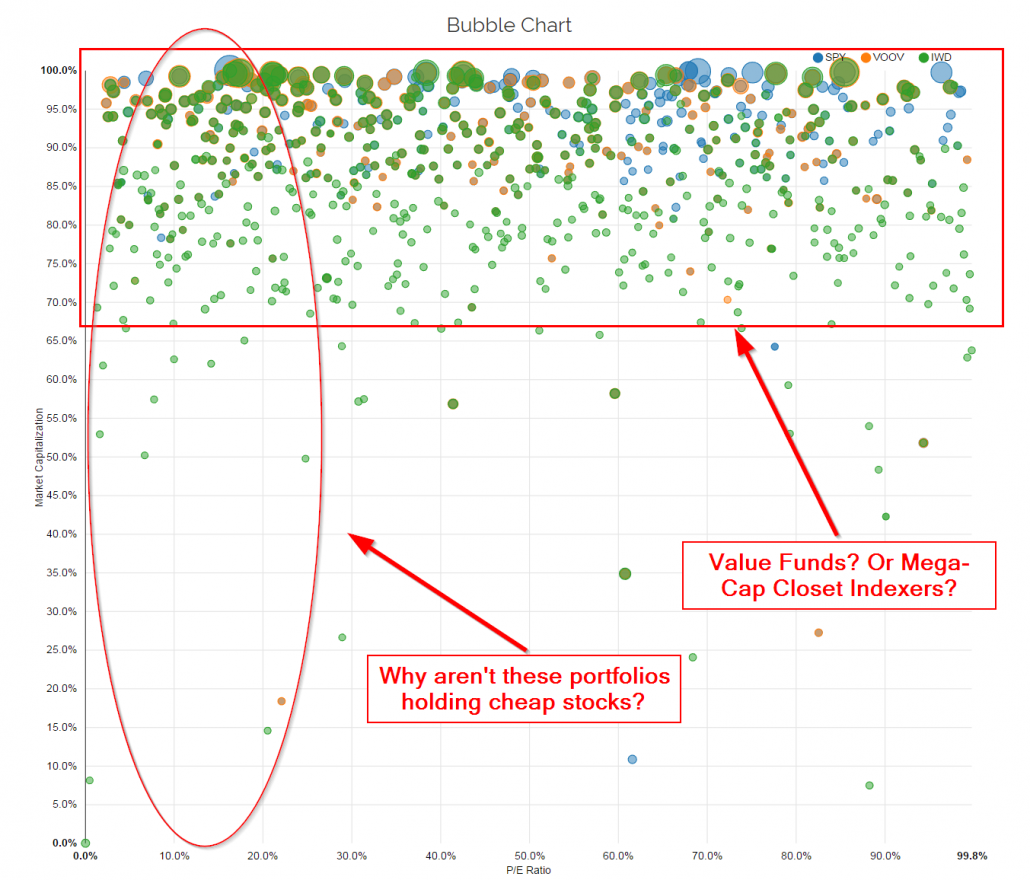

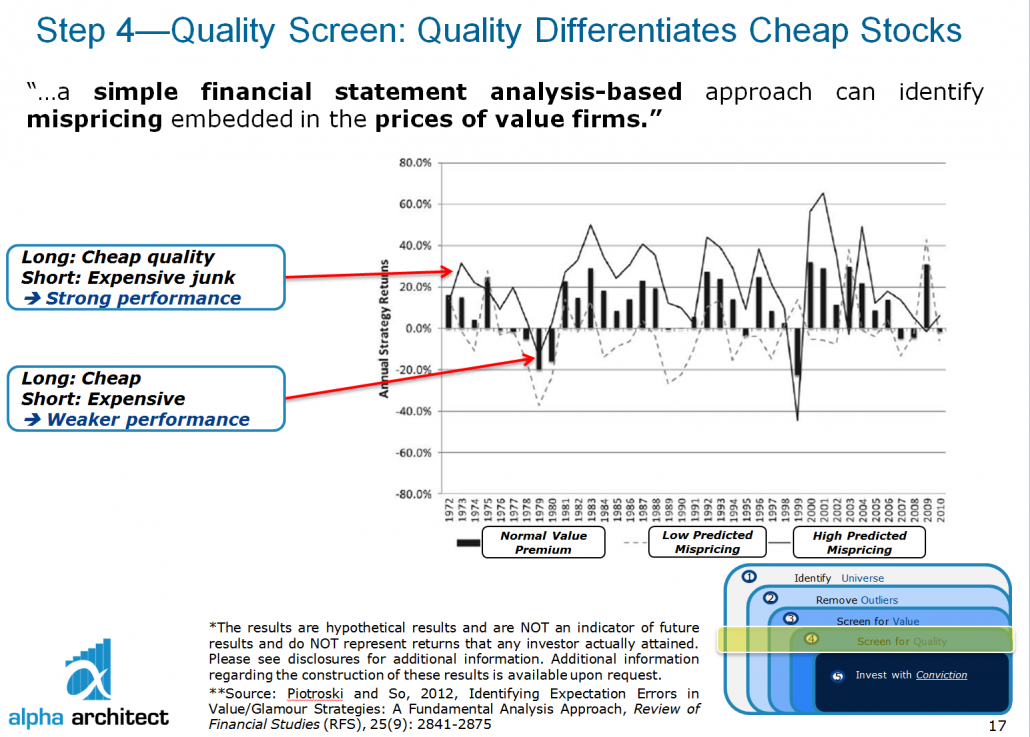

Moreover, there is already substantial evidence that generic value investing algorithms can be improved by incorporating additional information about fundamental value. Piotroski and So 2012 study this topic formally and find evidence corroborating the key takeaway of this paper: simple value metrics can be substantially improved by trying to separate the cheapest stocks into those that are likely to be mispriced and those that are likely to be high risk. No surprise to us, their technique for separating the winners from the losers among the cheapest stocks boils down to quality metrics. Here is a slide deck from a presentation on our internal systematic value program, which highlights a table from Piotroski and So.
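For readers unfamiliar with the F-score mentioned earlier, here is a simplified Python sketch of Piotroski's nine binary signals, one common quality screen for separating cheap winners from cheap losers. The `FirmYear` field names are hypothetical, and the equity-issuance signal is approximated by a change in shares outstanding, which simplifies the original definition.

```python
from dataclasses import dataclass


@dataclass
class FirmYear:
    roa: float                 # net income / beginning-of-year total assets
    cfo: float                 # operating cash flow / beginning-of-year total assets
    leverage: float            # long-term debt / total assets
    current_ratio: float       # current assets / current liabilities
    shares_outstanding: float  # used here as a rough proxy for equity issuance
    gross_margin: float
    asset_turnover: float      # sales / total assets


def f_score(curr: FirmYear, prev: FirmYear) -> int:
    """Count of the nine binary signals (0 = weakest, 9 = strongest)."""
    signals = [
        curr.roa > 0,                                        # profitable
        curr.cfo > 0,                                        # positive operating cash flow
        curr.roa > prev.roa,                                 # improving profitability
        curr.cfo > curr.roa,                                 # cash earnings exceed accrual-based earnings
        curr.leverage < prev.leverage,                       # falling leverage
        curr.current_ratio > prev.current_ratio,             # improving liquidity
        curr.shares_outstanding <= prev.shares_outstanding,  # no new equity (proxy)
        curr.gross_margin > prev.gross_margin,               # improving margins
        curr.asset_turnover > prev.asset_turnover,           # improving efficiency
    ]
    return sum(signals)
```

Applied only to the cheapest bucket, a high score flags cheap firms with improving fundamentals, and a low score flags those that may be cheap for a reason.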

Are quality metrics the only way one can separate the winners from the losers among the cheapest stocks? Not by a long shot. In our recent working paper we use 11 different “mispricing” techniques described in Stambaugh, Yu, and Yuan to ex-ante sort the cheapest value stocks into a “mispriced value” bucket and a “value for a reason” bucket. The results are summarized in the table below.

Basically all the “alpha” is associated with cheap stocks that can be ex-ante identified as being mispriced. Cheap stocks with no expected mispricing component are fairly priced, on average.
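One way to build a "mispriced value" versus "value for a reason" split is to average each stock's percentile rank across several anomaly signals and then sort the cheap basket on that composite. The Python sketch below follows that composite-rank idea in spirit. It is not necessarily the exact procedure in our working paper, and the signal names, the 50/50 split, and the assumption that higher values mean "more overpriced" are all hypothetical.

```python
def percentile_ranks(values: list[float]) -> list[float]:
    """Rank each value within the cross-section, scaled to [0, 1] (ties broken by position)."""
    n = len(values)
    if n == 1:
        return [0.5]
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0.0] * n
    for position, index in enumerate(order):
        ranks[index] = position / (n - 1)
    return ranks


def composite_score(signals: dict[str, list[float]]) -> list[float]:
    """Average percentile rank across anomaly signals.

    Each signal lists one value per stock (same stock order) and is assumed to be
    oriented so that a higher value means the stock looks more overpriced.
    """
    ranked = [percentile_ranks(values) for values in signals.values()]
    n_stocks = len(ranked[0])
    return [sum(r[i] for r in ranked) / len(ranked) for i in range(n_stocks)]


def split_cheap_stocks(tickers: list[str], scores: list[float], frac: float = 0.5) -> tuple[list[str], list[str]]:
    """Split an already-cheap basket: lowest scores = 'mispriced value', the rest = 'value for a reason'."""
    order = sorted(range(len(tickers)), key=lambda i: scores[i])
    cut = int(len(order) * frac)
    return [tickers[i] for i in order[:cut]], [tickers[i] for i in order[cut:]]


# Hypothetical cheap-stock basket with two made-up anomaly signals
tickers = ["A", "B", "C", "D"]
scores = composite_score({"signal_1": [0.1, 0.9, 0.2, 0.8], "signal_2": [1.0, 4.0, 2.0, 3.0]})
print(split_cheap_stocks(tickers, scores))  # (['A', 'C'], ['D', 'B'])
```

Averaging ranks rather than raw values keeps any single signal with a large scale from dominating the composite.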

Does this mean that one can exploit the value premium in a much cleaner, lower-risk way that is superior to simply buying a basket of the cheapest stocks and calling it a day? No way. ALL value strategies that attempt to exploit the value premium will necessarily test the stomach of even the most iron-willed investor on planet Earth, even the fanciest ones! There is no way around this truth. No pain, no gain. This is a fundamental aspect of a properly functioning competitive market. Sustainable alpha is sustainable for a reason: it sucks to hold onto these portfolios through thick and thin. Easy alpha is fleeting and always changing. Risk, be it true systematic risk or arbitrage-related risk, cannot be destroyed, and someone has to pay the piper. Simple value (and even “sophisticated” value) works for a reason: these strategies are riskier in some form or fashion, even if that “risk” would not fall out of a purely theoretical macroeconomic model that assumes rational expectations. Risk is often in the eye of the beholder, not defined by a mathematical model.

Another open question is whether a human security analyst can add more value to a generic cheap-stock screen than an improved algorithm can. I think everyone knows where we stand on this question, but it is debatable. Perhaps a “quantamental” cyborg approach, in which a sophisticated algorithm generates the portfolio and a human analyst fine-tunes it, can add marginal value?(15)

Jim Simons of Renaissance Technologies has a view on this. In 2010, he said:

Some investing firms say…they have models, and what they typically mean is…we have a model. It advises the trader what to do, and if he likes the advice he’ll take it and if he doesn’t like the advice he won’t take it. Well that’s…not science. You can’t simulate how you would do. How were you feeling when you got out of bed…thirteen years ago, when you’re looking at historical simulations. Did you like what the model said, or didn’t you like what the model said? It’s a hard thing to back-test.

So in the end, we’re skeptical about whether humans add value over algorithms (but we hope they keep trying!). Nonetheless, this is the question we should all be asking, not whether simplistic valuation-metric-based investment approaches can be improved upon. We already know there are techniques to improve upon these strategies.

The real question is whether we need a human to conduct this analysis or whether an algorithm can achieve the same (or better) end state. Fortunately, the question of whether human experts can reliably beat algorithms has already been addressed.

To quote Paul Meehl, an eminent psychologist, summarizing the empirical evidence on whether models beat human experts:

There is no controversy in social science that shows such a large body of qualitatively diverse studies coming out so uniformly in the same direction as this one [models outperform experts].

Finally, Something We Can All Agree Upon

We’ve conducted a fairly detailed discussion of this paper. Our focus has been on playing devil’s advocate, to ensure that readers of this new paper busting on simple systematic value strategies consider broader opinions before dramatically shifting their views in one direction or the other. I want to be clear that I think this paper is interesting and the decomposition analysis is pretty cool. I also want to be clear that we agree with their final conclusion:

We caution against using this evidence to conclude that such strategies [simple systematic value strategies] can deliver healthy outperformance in the future.

Final Thoughts on Formulaic Value Investing

We believe that even the simplest systematic value strategy will likely earn higher expected returns than the broader market over the next 50 years, but those returns may not be “healthy,” and they will surely be volatile as heck. We also believe that more sophisticated systematic value strategies will likely earn a marginal premium over the simplest value strategies over the next 50 years. But let’s not fool ourselves: the bulk of the excess expected returns that may be realized by these “sophisticated” value strategies will come from the fact that these portfolios own cheap companies the world hates, not from the “sophistication.”

Let’s also be clear that we don’t believe a human needs to be involved in any of these value investing processes to deliver the goods. The only role a human will play is to provide the algo with sell orders to match the cheap-stock algo’s buy orders. And human investors will do this because 1) they can’t handle the excess risk, and 2) they can’t handle the mental stress of living with a cheap-stock portfolio.

Thanks for reading and we look forward to continuing a discussion that will likely never have a “right” answer. We just enjoy partaking in the debate.

Facts About Formulaic Value Investing

- U-Wen Kok, Jason Ribando, and Richard Sloan

- A version of the paper can be found here.

Abstract:

The term ‘value investing’ is increasingly being adopted by quantitative investment strategies that use ratios of common fundamental metrics (e.g., book value, earnings) to market price. A hallmark of such strategies is that they do not involve a comprehensive effort to determine the intrinsic value of the underlying securities. We document two facts about such strategies. First, there is little compelling evidence that such strategies deliver superior investment performance for U.S. equities. Second, instead of identifying undervalued securities, these strategies systematically identify firms with temporarily inflated accounting numbers. We argue that these strategies should not be confused with value strategies that employ a comprehensive approach to determine the intrinsic value of the underlying securities.

References

| Note | Text |
|---|---|
| 1 | Here is another article tackling the man versus machine argument. |
| 2 | I’ll go knock on wood now… |
| 3 | Graham, Benjamin. “A Conversation with Benjamin Graham.” Financial Analysts Journal, Vol. 32, No. 5 (1976), pp. 20–23. This article was first brought to our attention by Charles Mizrahi. |
| 4 | Chart based on P/B: |
| 5 | See https://activeshare.info/fund/dfa-investment-dimensions-group-inc-ta-us-core-equity-2-portfolio for a look at DFA active share. |
| 6 | If investors make these sorts of allocations, they are stuffed in the “alternative bucket,” which is often delivered via tax-inefficient and expensive product wrappers. Additionally, much of this “alpha” is unobtainable in the real world, especially on the short side, due to various constraints, including bid/ask spreads, liquidity, the ability to locate and borrow shares, rebates, etc. So even if there were alpha, it would be tough to extract after accounting for fees, taxes, and frictional costs. Not impossible, but challenging. Moreover, many investors don’t really appreciate how these allocations are supposed to work in their portfolios (as true diversifiers), and they often get rid of them when they underperform long-only benchmarks. Again, we’re not suggesting that L/S is evil, bad, or impossible, just that this form of investing is somewhat unrealistic for the vast majority of the investing public. |
| 7 | The record of DFA also shows that simple value-based strategies have been effective. |
| 8 | Perhaps Jack Bogle and his friends should look at the epic flows of capital (magnitudes more than into almost all other “styles”) into this systematic factor fund with great recent performance called the S&P 500, which tilts toward large-caps and quality and targets a beta of 1. Can we use the same logic applied to the value premium to suggest we are on the verge of arbitraging away the beta premium? Would I bet that beta will pay no premium over the next 50 years? Heck no. But we can definitely predict that the realized returns on the beta premium (and size/quality factors) are probably going to be volatile in the future, especially given the lemming-like mob scene attracted to this particular factor fund! |
| 9 | AQR has a cool paper on these ideas here. |
| 10 | This isn’t a statement about large/mega-cap value based on B/M, where there is stronger evidence that there isn’t much of an effect. |
| 11 | DFA has moved away from simple B/M (e.g., by adding gross profitability), but its core process has been built around this signal, as well as size. |
| 12 | And of course there are also issues surrounding how to measure HML in the first place. Some would argue that the way Fama and French measure HML handicaps the value premium. A good example is the research on the HML “devil” factor described by the team at AQR. |
| 13 | Chris Meredith has a great takedown of B/M here. |
| 14 | Seems to |
| 15 | Seems to work in chess? |

About the Author: Wesley Gray, PhD

—

Important Disclosures

For informational and educational purposes only and should not be construed as specific investment, accounting, legal, or tax advice. Certain information is deemed to be reliable, but its accuracy and completeness cannot be guaranteed. Third party information may become outdated or otherwise superseded without notice. Neither the Securities and Exchange Commission (SEC) nor any other federal or state agency has approved, determined the accuracy, or confirmed the adequacy of this article.

The views and opinions expressed herein are those of the author and do not necessarily reflect the views of Alpha Architect, its affiliates or its employees. Our full disclosures are available here. Definitions of common statistics used in our analysis are available here (towards the bottom).
