Documentation of the File Drawer Problem in Academic Finance Journals

- Matthew R. Morey and Sonu Yadav

- Journal of Investment Management

- A version of this paper can be found here


What are the research questions?

Coined by Rosenthal in 1979, the term file drawer problem refers to the notion that journal editors are biased toward accepting articles with statistically significant results over those with nonsignificant results. Competition among journals to raise citation counts and impact factors is considerable, and because articles with significant results are more likely to be cited, that competition reinforces the bias. As a result, a research report that lacks significant results is likely to be deposited in a file drawer, forever hidden from the light of day, rather than submitted for publication (see here and here for in-depth coverage of this topic).
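To make the selection mechanism concrete, here is a minimal Python sketch (not from the paper). It assumes every study tests a true effect of zero using a standard-normal z-statistic, and that only results with a two-sided p-value below 0.05 leave the file drawer. The function names and the 10,000-study sample size are hypothetical choices for illustration.

```python
import math
import random


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard-normal test statistic: 2 * (1 - Phi(|z|))."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def simulate_file_drawer(n_studies: int = 10_000, alpha: float = 0.05, seed: int = 0):
    """Simulate studies of a true null effect; only significant ones get 'published'."""
    rng = random.Random(seed)
    published, file_drawer = [], []
    for _ in range(n_studies):
        z = rng.gauss(0.0, 1.0)  # true effect is zero, so z ~ N(0, 1)
        p = two_sided_p(z)
        # Editors accept only p < alpha; everything else goes in the drawer.
        (published if p < alpha else file_drawer).append(p)
    return published, file_drawer


published, drawer = simulate_file_drawer()
share = len(published) / (len(published) + len(drawer))
print(f"published: {len(published)}, in the file drawer: {len(drawer)} ({share:.1%} published)")
```

Under these assumptions roughly 5% of the null studies get "published," every one of them is a false positive, and the remaining ~95% are never seen, so the published record looks uniformly significant even though there is nothing to find.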

This paper addresses the following questions:

- Is there a significant file drawer problem in finance journals?

- Does the file drawer problem extend to lower-ranked finance journals?

- Are there any solutions to reduce the file drawer problem?

What are the Academic Insights?

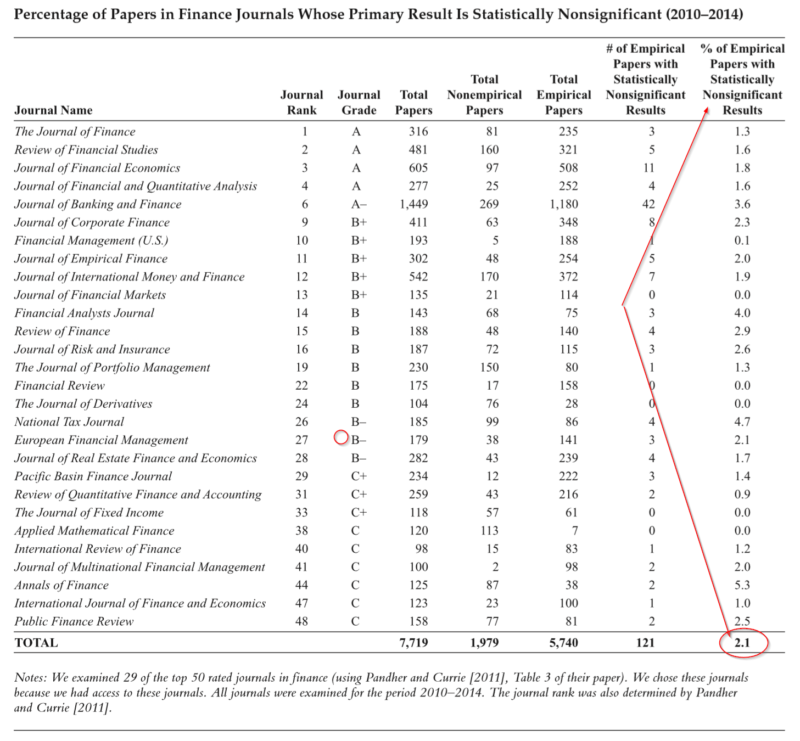

- YES. Although the problem is well known in scientific, medical and social science research, it has only begun to be recognized in finance. The authors report that just over 2% of the empirical papers published in the 29 finance journals they examined over a recent five-year period (2010–2014) had statistically nonsignificant results. Five of the 29 journals published NO studies with nonsignificant results over the five-year period.

- YES. The file drawer problem is just as bad, perhaps even worse, in lower-ranked journals: journals ranked C+ or lower published articles with nonsignificant results only 1.4% of the time.

- YES. Eliminate the practice of p-hacking, and apply stricter standards, such as a p-value threshold of 0.005 instead of the conventional 0.05, to improve replicability and reduce the incidence of false positives. Because fewer studies would clear this higher bar, more papers would be published without significant results.
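The trade-off behind the 0.005 proposal can be sketched with a small simulation. This is an illustration under stated assumptions, not the authors' analysis: z-statistics are standard normal when there is no effect, and a hypothetical real effect shifts the mean z-statistic to 2.5 (the name `TRUE_EFFECT_Z` and that value are made up for the example).

```python
import math
import random


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard-normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def rejection_rate(mean_z: float, alpha: float, n: int = 20_000, seed: int = 1) -> float:
    """Fraction of simulated studies with p < alpha when z ~ N(mean_z, 1)."""
    rng = random.Random(seed)
    hits = sum(two_sided_p(rng.gauss(mean_z, 1.0)) < alpha for _ in range(n))
    return hits / n


TRUE_EFFECT_Z = 2.5  # hypothetical mean z-statistic when a real effect exists

for alpha in (0.05, 0.005):
    false_pos = rejection_rate(0.0, alpha)            # no real effect
    detected = rejection_rate(TRUE_EFFECT_Z, alpha)   # real effect present
    print(f"alpha={alpha}: false-positive rate {false_pos:.3f}, "
          f"real effects reaching significance {detected:.3f}")
```

The roughly tenfold drop in false positives follows directly from the ratio of the two thresholds; how much the share of detected real effects falls depends entirely on the assumed effect size.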

Why does it matter?

The authors discuss two problems caused by the file drawer problem:

- Since journals publish a biased sample of articles, readers are exposed to biased results in the discipline of finance; and

- Researchers face strong incentives to manipulate, cherry-pick and otherwise p-hack their results, driven by the demands of tenure and promotion, conferences, research grants and journals, while doing so carries relatively little cost because the likelihood that any one study will be replicated is very low.
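One common form of p-hacking is trying many specifications and reporting only the best one. The hedged sketch below assumes k independent specifications, each tested on data with no true effect; real specifications are usually correlated, so the inflation is smaller in practice, but it points the same way. Under independence, the chance of at least one p < 0.05 is 1 - 0.95^k. The function name and simulation sizes are hypothetical.

```python
import math
import random


def two_sided_p(z: float) -> float:
    """Two-sided p-value for a standard-normal test statistic."""
    return math.erfc(abs(z) / math.sqrt(2.0))


def p_hacked_hit_rate(k_specs: int, alpha: float = 0.05,
                      n_projects: int = 10_000, seed: int = 2) -> float:
    """Share of null projects reporting p < alpha when the researcher
    tries k_specs independent specifications and keeps the smallest p-value."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(n_projects):
        # No true effect: every specification's z-statistic is N(0, 1).
        best_p = min(two_sided_p(rng.gauss(0.0, 1.0)) for _ in range(k_specs))
        hits += best_p < alpha
    return hits / n_projects


for k in (1, 5, 20):
    simulated = p_hacked_hit_rate(k)
    analytic = 1 - (1 - 0.05) ** k  # closed form under the independence assumption
    print(f"k={k:2d}: simulated {simulated:.3f}, analytic {analytic:.3f}")
```

With 20 independent tries, a "significant" finding on pure noise becomes more likely than not, which is the kind of result that then survives the file drawer filter.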

A quote from the authors:

Indeed, Harvey, Liu, and Zhu [2016] argued that because of the problems of p-hacking and other issues, “most claimed research findings in financial economics are likely false.”

UGH.

The most important chart from the paper

Abstract

The file drawer problem is a publication bias wherein editors of journals are much more likely to accept empirical papers with statistically significant results than those with nonsignificant results. As a result, papers that have nonsignificant results are not published and are relegated to the file drawer, never to be seen by others. In this article, the authors examine the prevalence of the file drawer problem in finance journals. To do this, they examine 29 finance journals for five years (2010–2014). These journals include A-ranked journals as well as B- and C-ranked journals. In an examination of over 5,740 empirical papers, they found that only 121, or 2.1% of the papers, had statistically nonsignificant results. Indeed, for some journals, there was not a single article that had nonsignificant results. Furthermore, their findings indicate that the file drawer problem is just as bad, if not worse, in lower-ranked journals compared with top-ranked journals. The percentage of papers with nonsignificant results is actually somewhat smaller in B- and C-ranked journals than in A-ranked journals. These results suggest that there is a publication bias in finance journals.

About the Author: Tommi Johnsen, PhD

—

Important Disclosures

For informational and educational purposes only and should not be construed as specific investment, accounting, legal, or tax advice. Certain information is deemed to be reliable, but its accuracy and completeness cannot be guaranteed. Third party information may become outdated or otherwise superseded without notice. Neither the Securities and Exchange Commission (SEC) nor any other federal or state agency has approved, determined the accuracy, or confirmed the adequacy of this article.

The views and opinions expressed herein are those of the author and do not necessarily reflect the views of Alpha Architect, its affiliates or its employees. Our full disclosures are available here. Definitions of common statistics used in our analysis are available here (towards the bottom).
